AI Governance Under the EU AI Act: Risk Classification and Compliance Readiness for 2026

This blog was originally posted on 12th February, 2026. Further regulatory developments may have occurred after publication. To keep up-to-date with the latest compliance news, sign up to our newsletter.

BASED ON AI RULES ARE CHANGING: KEY REGULATORY UPDATES FOR 2025 AND 2026, BY DILA ŞEN, SENIOR REGULATORY SPECIALIST, AND CHELSEA NÍ CHUINNEAGÁIN, SENIOR REGULATORY COMPLIANCE SPECIALIST & HEAD OF KNOWLEDGE PARTNERS, COMPLIANCE & RISKS.

The regulation of artificial intelligence in Europe is entering a crucial phase. With the EU AI Act moving toward phased implementation in 2026, companies face a fundamental shift in how AI systems are governed, documented, and monitored. But what do the new AI governance and risk assessment obligations mean in practice?

AI is no longer treated as a neutral technology. It is now evaluated according to the level of risk it poses to safety, fundamental rights, and society. Compliance now depends on understanding how each AI system is classified and governed throughout its lifecycle. This applies to organizations that develop, deploy, or use AI in their processes.

This article is based on our webinar AI Rules Are Changing: Key Regulatory Updates for 2025 and 2026, with our subject matter experts Dila Şen and Chelsea Ní Chuinneagáin. Drawing on that discussion, we explain how AI governance works under the EU AI Act. What does risk classification mean in practice? How can companies prepare for compliance? Find out the answers below.

Why is AI Governance Now a Legal Requirement?

AI governance used to be largely voluntary, guided by ethical frameworks or internal best practices. The EU AI Act changes that by introducing binding legal obligations that apply across sectors and industries.

Under the regulation, companies must demonstrate that they:

- Identify and assess AI risks

- Assign accountability across the organization

- Maintain oversight throughout system deployment and use

AI governance is therefore no longer limited to development teams. Legal, compliance, product, and risk functions now play a key role in ensuring that AI systems meet regulatory expectations.

The EU AI Act Risk-Based Framework Explained

The core of the regulation is a risk-based approach, which determines the level of regulatory control applied to an AI system. Every organization that uses AI must assess where its systems fall within this framework.

Prohibited AI Practices

Certain AI practices are prohibited outright because they present unacceptable risks. Examples include systems that manipulate behavior and certain forms of biometric surveillance. Deploying a prohibited AI system may result in immediate punitive measures.

High-Risk AI Systems

High-risk AI systems are permitted but heavily regulated. They are typically used in sensitive areas such as:

- Employment and worker management

- Education and training

- Creditworthiness and access to services

- Safety components of regulated products

Under the EU AI Act, high-risk AI systems must meet comprehensive requirements covering data governance, risk management, human oversight, and documentation.

Limited-Risk AI Systems

Limited-risk AI systems are primarily subject to transparency obligations. For example, users should be informed when AI is being used or when content is generated by AI.

Minimal-Risk AI Systems

Most AI applications fall into this category and require minimal regulatory intervention, although voluntary codes of conduct are encouraged.

Risk classification under the EU AI Act is therefore mandatory and forms the basis of compliance.
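To make the four tiers concrete, here is a minimal Python sketch of how a compliance team might encode them internally. The names `RiskTier`, `OBLIGATIONS`, and `obligations_for` are illustrative assumptions, and the obligation summaries paraphrase this article rather than the legal text, so treat it as a starting point for discussion rather than a classification tool.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk tiers described in this article (hypothetical internal encoding)."""
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligation summaries paraphrase the article, not the regulation itself.
OBLIGATIONS = {
    RiskTier.PROHIBITED: ["must not be deployed"],
    RiskTier.HIGH: [
        "data governance",
        "risk management",
        "human oversight",
        "documentation",
    ],
    RiskTier.LIMITED: ["transparency towards users"],
    RiskTier.MINIMAL: ["voluntary codes of conduct (encouraged)"],
}


def obligations_for(tier: RiskTier) -> list[str]:
    """Return a copy of the obligation summary so callers cannot mutate the table."""
    return list(OBLIGATIONS[tier])


for tier in RiskTier:
    print(f"{tier.value}: {', '.join(obligations_for(tier))}")
```

Keeping the tier-to-obligation mapping in one table means legal and product teams review a single source when guidance changes.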

What Are the Governance Obligations Across the AI Lifecycle?

A fundamental principle of the regulation is that AI governance applies to a system's entire lifecycle, not just the development phase.

Companies must therefore manage compliance during:

- Design and development

- Data collection and training

- Deployment and operational use

- Post-market monitoring and updates

Our webinar emphasized that changes to an AI system, such as retraining its models or expanding its functionality, require a reassessment of its risk classification and compliance obligations.

Responsibility also falls on organizations that use third-party AI. Importers, distributors, and deployers may have legal obligations, depending on their role.
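The reassessment point above can be turned into a simple change-management check. The sketch below assumes a team logs changes with a free-text `change_type`. The trigger names and the `ChangeEvent` structure are hypothetical, and the two triggers listed are only the examples named in this article.

```python
from dataclasses import dataclass

# Hypothetical change categories. The article names retraining and
# expanded functionality as examples that call for reassessment.
REASSESSMENT_TRIGGERS = {"model_retrained", "functionality_expanded"}


@dataclass(frozen=True)
class ChangeEvent:
    system_name: str
    change_type: str  # e.g. "model_retrained" or "ui_copy_updated"


def needs_reassessment(event: ChangeEvent) -> bool:
    """Return True when the change type is on the team's trigger list."""
    return event.change_type in REASSESSMENT_TRIGGERS


event = ChangeEvent(system_name="cv-screening", change_type="model_retrained")
if needs_reassessment(event):
    print(f"Reassess risk classification for {event.system_name}")
```

In practice the trigger list would be agreed with legal and compliance teams. The value of the check is that the question gets asked on every change.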

Documentation and Traceability Requirements

Clear, well-reasoned, and organized documentation is a core requirement of AI Act compliance, particularly for high-risk AI systems.

Organizations are expected to maintain:

- Technical documentation explaining system purpose and design

- Data governance records for training and testing datasets

- Risk management documentation demonstrating mitigation measures

- Logs and records enabling traceability of AI outputs

These records must be kept up to date and made available to authorities upon request. Failure to do so may result in a finding of non-compliance, even if the AI system is functioning as expected.
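A documentation checklist like the one above lends itself to an automated completeness check. The following sketch assumes each AI system has a set of record types already on file. The identifiers in `REQUIRED_RECORDS` are illustrative labels for the four items listed in this article, not official terminology.

```python
# Illustrative labels for the four record types listed in this article.
REQUIRED_RECORDS = (
    "technical_documentation",
    "data_governance_records",
    "risk_management_documentation",
    "traceability_logs",
)


def missing_records(available: set[str]) -> list[str]:
    """Return the required record types not yet on file, in checklist order."""
    return [record for record in REQUIRED_RECORDS if record not in available]


on_file = {"technical_documentation", "traceability_logs"}
for record in missing_records(on_file):
    print(f"Missing: {record}")
```

A check like this only confirms that a record exists. Whether its content is current and sufficient still needs human review.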

Human Oversight as a Core Compliance Principle

The EU AI Act emphasizes that AI systems must remain under meaningful human control.

For high-risk AI systems, companies must ensure that:

- Humans can interpret AI outputs

- Clear intervention or override mechanisms exist

- Personnel supervising AI systems are appropriately trained

This requirement has important implications, especially for highly automated decision-making systems. It requires organizations to reassess the degree of autonomy granted to AI tools.
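One way to picture an override mechanism is a decision record in which a human reviewer's choice always takes precedence over the AI recommendation. The sketch below is a simplified illustration under that assumption. `ReviewedDecision` and its fields are hypothetical names, not a structure prescribed by the regulation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewedDecision:
    """An AI recommendation paired with an optional human override (hypothetical)."""
    ai_recommendation: str
    explanation: str  # plain-language rationale shown to the reviewer
    reviewer_override: Optional[str] = None

    @property
    def final_outcome(self) -> str:
        # The human reviewer's decision takes precedence whenever one is recorded.
        if self.reviewer_override is not None:
            return self.reviewer_override
        return self.ai_recommendation


decision = ReviewedDecision("reject", "Score below internal threshold")
decision.reviewer_override = "approve"
print(decision.final_outcome)
```

Storing the explanation alongside the recommendation supports the interpretability point, since the reviewer sees why the system reached its output before deciding whether to intervene.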

Organizational Readiness and AI Governance Structures

As discussed in our webinar, AI compliance under the EU AI Act cannot be addressed in isolation. Companies that struggle most with the regulation generally lack:

- A centralized inventory of AI systems

- Clear ownership and accountability

- Integration between AI governance and enterprise risk management

By contrast, better-prepared organizations often:

- Maintain an internal AI register

- Align AI governance with existing compliance programs

- Involve legal, compliance, IT, and product teams early

In effect, the EU AI Act requires organizations to mature their internal governance structures around AI.
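An internal AI register can start as a very small data structure. The sketch below assumes each entry records a system name, a risk tier, and an accountable owner. All field and function names are illustrative, and a real register would likely live in a governance or risk management tool rather than in code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RegisterEntry:
    name: str
    risk_tier: str             # "prohibited", "high", "limited", or "minimal"
    owner: Optional[str]       # accountable person or team, None if unassigned
    third_party: bool = False  # True when the system is supplied by a vendor


def unowned_systems(register: list[RegisterEntry]) -> list[str]:
    """Return the names of systems with no accountable owner assigned."""
    return [entry.name for entry in register if not entry.owner]


def high_risk_systems(register: list[RegisterEntry]) -> list[str]:
    """Return the names of systems classified as high-risk."""
    return [entry.name for entry in register if entry.risk_tier == "high"]


register = [
    RegisterEntry("cv-screening", "high", "HR Compliance"),
    RegisterEntry("support-chatbot", "limited", None, third_party=True),
]
print("No owner:", unowned_systems(register))
print("High-risk:", high_risk_systems(register))
```

Even a register this simple surfaces two of the gaps listed above: systems nobody owns and high-risk systems that need the most attention.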

Timing, Enforcement, and Why Early Action Matters

Although some obligations are being phased in gradually, expectations of greater oversight are already taking hold, with regulatory bodies prioritizing high-risk AI systems and sensitive use cases.

Early preparation allows companies to:

- Identify AI systems that may require redesign

- Address supply chain compliance dependencies

- Avoid rushed remediation close to enforcement deadlines

Treating transition periods as preparation windows, rather than delays, is therefore crucial for managing compliance risk.

Key Takeaways for Compliance and Risk Teams

- AI governance under the EU AI Act is mandatory

- Risk classification determines the scope of obligations

- Documentation and traceability are central compliance pillars

- Human oversight is required for high-risk AI systems

- Early governance planning reduces regulatory and operational risk

How to Build AI Governance for the Future

The EU AI Act marks a turning point in global AI regulation: governance, accountability, and risk management are becoming legal requirements.

Organizations are advised to invest early in AI governance frameworks, risk assessment processes, and cross-functional collaboration. This will better position them to meet compliance expectations, allowing them to continue innovating responsibly.

AI compliance is not just about avoiding penalties. It is a way to build trust, resilience, and long-term viability in an increasingly regulated AI landscape.

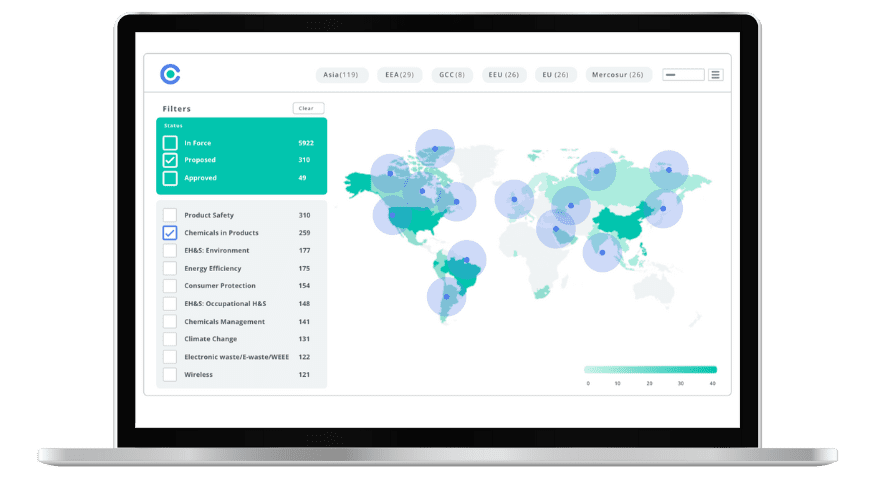

Simplify Corporate Sustainability Compliance

Six months of research, done in 60 seconds. Cut through ESG chaos and act with clarity. Try C&R Sustainability Free.