EU AI Act Compliance Requirements for Companies: What to Prepare for 2026

This blog was originally posted on 3rd February, 2026. Further regulatory developments may have occurred after publication. To keep up-to-date with the latest compliance news, sign up to our newsletter.

BASED ON AI RULES ARE CHANGING: KEY REGULATORY UPDATES FOR 2025 AND 2026, BY DILA ŞEN, SENIOR REGULATORY SPECIALIST, AND CHELSEA NÍ CHUINNEAGÁIN, SENIOR REGULATORY COMPLIANCE SPECIALIST & HEAD OF KNOWLEDGE PARTNERS, COMPLIANCE & RISKS.

A new phase of AI regulation is starting. With the adoption of the EU Artificial Intelligence Act (EU AI Act), businesses that develop, deploy, or use AI systems face a structured and enforceable regulatory framework for the first time. The Act's compliance requirements apply not just to EU-based companies but also to companies whose AI systems are placed on, or used in, the EU market.

Companies therefore need to do more than be aware of the new compliance measures: they should plan for them properly during 2026. This article explains how the EU AI Act works, which AI systems are affected, and what companies need to do now to remain compliant and mitigate regulatory risk.

What Are the EU AI Act Compliance Requirements for Companies?

EU AI Act compliance requirements for businesses are legally binding obligations that apply to AI systems based on the level of risk they pose to fundamental rights, safety, and public interests. A company's obligations depend on its role in the AI value chain (such as provider, deployer, importer, or distributor) and on the risk classification of its AI system.

Compliance requirements range from transparency disclosures for limited-risk systems to extensive governance, documentation, and monitoring obligations for high-risk AI systems. Failure to comply can result in fines, product bans, and reputational damage.

Why the EU AI Act Matters for Global Businesses

The EU AI Act's reach extends beyond EU member states. Any company that places AI systems on the EU market, or whose AI outputs are used within the EU, must comply, no matter where it is established. The regulation is therefore highly relevant for multinational technology providers, SaaS platforms, manufacturers using AI-enabled components, and enterprises deploying AI internally for decision-making.

The Act also aligns with existing EU product safety, data protection, and consumer protection frameworks. This means AI compliance is no longer just a technical matter: it must be embedded in product governance and risk management processes.

Risk-Based Classification Under the EU AI Act

A risk-based approach is a central feature of EU AI Act compliance for companies. Under this approach, AI systems are categorized into four main groups:

- Unacceptable-risk AI, prohibited outright.

- High-risk AI, permitted but subject to strict compliance obligations.

- Limited-risk AI, requiring transparency measures.

- Minimal-risk AI, which remains largely unregulated.

Correct classification of AI systems is the foundation of compliance, since it determines which requirements apply and which enforcement actions regulators may take.
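The four tiers can be pictured as a simple lookup from risk category to regulatory treatment. The following is a minimal sketch only: the names `RiskTier`, `TIER_TREATMENT`, `treatment_for`, and `may_be_marketed` are hypothetical, and the descriptions paraphrase the list above rather than the legal text.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk categories described in this article."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative summary of each tier's treatment (not legal wording).
TIER_TREATMENT = {
    RiskTier.UNACCEPTABLE: "prohibited outright",
    RiskTier.HIGH: "permitted, subject to strict compliance obligations",
    RiskTier.LIMITED: "transparency measures required",
    RiskTier.MINIMAL: "largely unregulated",
}


def treatment_for(tier: RiskTier) -> str:
    """Return the high-level treatment for a risk tier."""
    return TIER_TREATMENT[tier]


def may_be_marketed(tier: RiskTier) -> bool:
    """Only unacceptable-risk systems are barred outright."""
    return tier is not RiskTier.UNACCEPTABLE
```

In practice, assigning a tier to a system is a legal assessment; a lookup like this only records the outcome of that assessment so downstream processes can act on it.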

Which Prohibited AI Practices Must Companies Avoid?

Based on EU fundamental values and rights, the EU AI Act prohibits certain AI practices, such as AI systems that manipulate human behavior in a harmful way, exploit the vulnerabilities of specific groups, or enable social scoring.

Companies must ensure that none of their AI-enabled features, analytics tools, or decision-making systems falls into these prohibited categories. Even indirect support for prohibited uses, such as supplying the components that enable them, can expose companies to the risk of sanctions.

High-Risk AI Systems: Core Compliance Requirements

The EU AI Act sets strict requirements for high-risk AI systems. These systems are typically used in regulated or sensitive contexts, such as employment, credit assessment, biometric identification, healthcare, education, and security-related product functions.

The compliance obligations for companies regarding high-risk AI include:

- Implementing a risk management system.

- Ensuring high-quality and representative training data.

- Establishing human oversight mechanisms.

- Maintaining detailed technical documentation.

Companies must also demonstrate accuracy, robustness, and cybersecurity throughout the system lifecycle. High-risk AI systems typically require a conformity assessment before being placed on the market. Companies should also prepare for post-market surveillance and take corrective action if risks arise.

Transparency Obligations for AI Systems

Even when an AI system is not classified as high-risk, companies may still have transparency obligations. Users need to be informed when they are interacting with an AI system, when content was generated or manipulated by AI, or when biometric categorization is involved.

These requirements directly affect user interfaces, labeling, product documentation, and customer communication. Companies must ensure that transparency measures are consistent and built into product design, not treated as an afterthought.

Generative AI and General-Purpose AI Compliance

The EU AI Act imposes specific obligations on general-purpose AI models and generative AI. While these systems are not necessarily classified as high-risk, providers must comply with transparency requirements, copyright safeguards, and risk mitigation measures.

Businesses that use or integrate generative AI must assess the downstream risks. Even if a model provider assumes certain obligations, deployers remain responsible for how the AI outputs are used. Contractual clarity and supplier due diligence are therefore essential components of compliance.

EU AI Act Timelines and Enforcement in 2026

The EU AI Act is being implemented in stages. Prohibitions on unacceptable-risk AI apply first, followed by governance and transparency obligations, with the full requirements for high-risk AI taking effect last.

Significant compliance obligations take effect for companies during 2026. Regulatory authorities may impose fines, restrict market access, and require product recalls, so advance planning is essential to avoid the disruption that enforcement can cause.

What Are the Penalties and Business Risks of Non-Compliance?

Failure to comply with the EU AI Act can result in administrative fines of up to €35 million or 7% of the company's global annual turnover, whichever is higher, for the most serious infringements, with lower ceilings for less severe ones. In addition to financial penalties, companies may also face operational disruptions, product launch delays, import bans, and damage to their reputation with customers.
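To see how the top fine ceiling scales with company size, the arithmetic can be sketched as below. This is an illustration under stated assumptions, not legal advice: the 7% rate and the "whichever is higher" rule reflect the Act's penalty provisions for the most serious infringements as commonly summarized and should be checked against the current legal text, and the function name `max_fine_ceiling_eur` is hypothetical.

```python
def max_fine_ceiling_eur(
    global_turnover_eur: int,
    fixed_cap_eur: int = 35_000_000,  # fixed amount cited in this article
    turnover_percent: int = 7,        # assumed rate for the most serious infringements
) -> int:
    """Return the higher of the fixed cap and the turnover-based cap.

    Integer arithmetic is used so the result is exact in whole euros.
    """
    turnover_cap = global_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_cap)


# A company with EUR 100 million turnover: 7% is EUR 7 million, so the fixed cap governs.
small = max_fine_ceiling_eur(100_000_000)

# A company with EUR 1 billion turnover: 7% is EUR 70 million, which exceeds the fixed cap.
large = max_fine_ceiling_eur(1_000_000_000)
```

The fixed amount dominates for smaller companies, while the turnover-based amount dominates once global turnover exceeds €500 million.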

Coordination between AI regulators, data protection authorities, and market watchdogs is also increasing, so enforcement actions are likely to be visible and damaging to reputation. Compliance should therefore be treated as a strategic risk management priority.

EU AI Act Compliance Checklist for Companies

EU AI Act compliance calls for a structured and documented program. In practice, this means:

- Start by creating a comprehensive inventory of all AI systems developed, procured, or deployed.

- Include their use cases and geographic reach.

- Classify each system against the EU AI Act risk categories and determine the obligations that apply to it.

- Evaluate whether any AI functionality could be considered prohibited and redesign or discontinue those uses.

- Implement formal risk management processes, data governance controls, human oversight procedures, and technical documentation frameworks for high-risk AI systems.

- Integrate transparency obligations into product interfaces and communications.

- Clarify supplier and contract management processes.

- Allocate AI compliance responsibilities across the value chain.

- Establish post-market monitoring, incident reporting, and internal training programs to ensure ongoing compliance as systems evolve.
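The inventory and gap-tracking steps in the checklist above could be captured in a small internal register. The sketch below is one possible shape, assuming a simplified mapping from risk tier to follow-up actions; `AISystemRecord`, `REQUIRED_ACTIONS`, `open_actions`, and the sample system are all hypothetical and do not represent a complete list of legal obligations.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    """One entry in a hypothetical AI system inventory."""
    name: str
    role: str               # e.g. "provider", "deployer", "importer", "distributor"
    use_case: str
    regions: list[str]      # geographic reach
    risk_tier: str          # "unacceptable", "high", "limited", or "minimal"
    completed: set[str] = field(default_factory=set)


# Simplified follow-up actions per tier, paraphrasing the checklist above.
REQUIRED_ACTIONS = {
    "unacceptable": ["redesign or discontinue"],
    "high": [
        "risk management",
        "data governance",
        "human oversight",
        "technical documentation",
        "post-market monitoring",
    ],
    "limited": ["transparency notice"],
    "minimal": [],
}


def open_actions(record: AISystemRecord) -> list[str]:
    """List the actions for this system's tier that are not yet completed."""
    return [a for a in REQUIRED_ACTIONS[record.risk_tier] if a not in record.completed]


# Hypothetical example: a recruitment tool deployed in the EU.
screening = AISystemRecord(
    name="cv-screening",
    role="deployer",
    use_case="candidate ranking",
    regions=["EU"],
    risk_tier="high",
    completed={"risk management"},
)
remaining = open_actions(screening)
```

Keeping completed actions on the record itself makes it straightforward to report outstanding gaps per system as the inventory grows.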

How Should Companies Start Preparing?

Preparation for compliance with the EU AI Act should begin well before the effective dates. Aligning legal, compliance, product, and engineering teams around a common understanding of AI risks and regulatory expectations is essential for businesses.

Companies can significantly reduce the risk of future sanctions by developing internal AI governance policies, conducting gap assessments, and incorporating compliance into product development cycles. As AI regulation continues to evolve globally, continuous monitoring of regulatory guidance and scalable compliance processes become essential.

Turning EU AI Act Compliance into a Competitive Advantage

The EU AI Act compliance requirements for companies mark a turning point in how AI is governed and deployed. While the regulation introduces new obligations and risks, it also provides clarity and consistency across the EU market.

Businesses therefore have good reason to invest early in AI compliance, transparency, and responsible governance. Doing so helps them strengthen their market position, maintain market access, build customer trust, and scale AI innovation sustainably. With implementation deadlines approaching, now is the time to move from awareness to action.

To learn more, check out our webinar on the topic: AI Rules Are Changing: Key Regulatory Updates for 2025 and 2026, with our subject matter experts Dila Şen and Chelsea Ní Chuinneagáin.

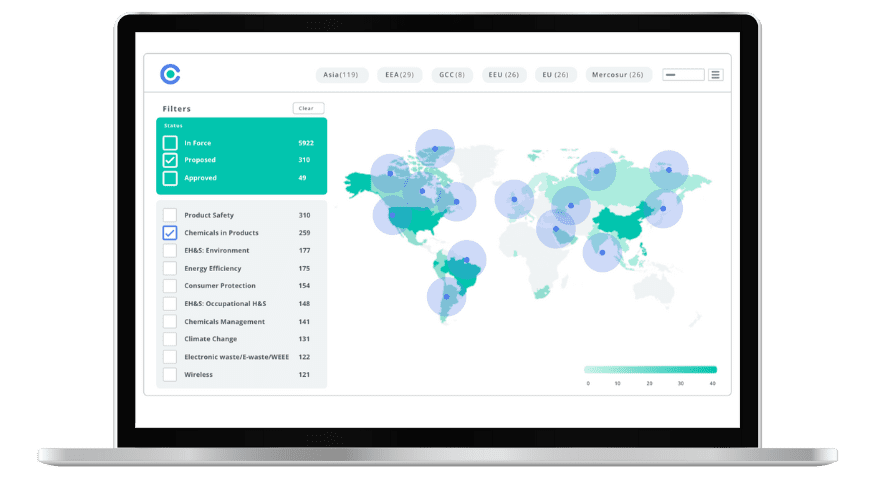
