William Fry AI Summit 2026: Trust, Governance and the Future of AI in Europe

This blog was originally posted on 15th May, 2026. Further regulatory developments may have occurred after publication. To keep up-to-date with the latest compliance news, sign up to our newsletter.

AUTHORED BY JOANNE O’DONNELL, HEAD OF GLOBAL REGULATORY COMPLIANCE TEAM AND PRINCIPAL SUBJECT MATTER EXPERT; ANI NOZADZE, SENIOR REGULATORY COMPLIANCE SPECIALIST, TEAM LEAD, AND PRINCIPAL SUBJECT MATTER EXPERT; COMPLIANCE & RISKS

The Third Annual AI Summit hosted by leading Irish law firm William Fry, held on 14 May in Dublin, brought together regulators, policymakers, technology leaders and enterprise executives to discuss one of the defining issues of our time: how Europe can harness the transformative power of Artificial Intelligence while maintaining trust, accountability and innovation. Joanne O’Donnell, Head of Global Regulatory Compliance Team, and Ani Nozadze, Senior Regulatory Compliance Specialist and Team Lead at Compliance & Risks, were among the invited attendees and have summarised the key takeaways from the Summit discussions.

Across four insightful panels, one message emerged consistently: Ireland is positioning itself at the centre of AI governance, AI innovation and AI investment within Europe. From the establishment of Ireland’s new AI Office to discussions on enterprise AI implementation, AI procurement and the geopolitical future of AI regulation, the summit highlighted both the enormous opportunity and the significant responsibility facing organisations deploying AI technologies today.

Table of Contents

- Building Ireland’s AI Regulatory Architecture: Trust as the Foundation of AI Innovation

- From Experimentation to Enterprise AI: The C-Suite Perspectives

- Procuring AI Responsibly: Contracts, Data and Vendor Trust

- Geopolitics, Digital Sovereignty and Europe’s AI Future

- Final Reflections: Ireland at the Centre of Europe’s AI Future

Building Ireland’s AI Regulatory Architecture: Trust as the Foundation of AI Innovation

The opening panel, “Regulating AI in Ireland: Emerging Supervisory Architecture”, moderated by Rachel Hayes of William Fry, explored how Ireland is preparing for implementation of the EU AI Act and the broader European digital regulatory framework.

Jean Carberry from the Irish Department of Enterprise, Tourism and Employment spoke about the establishment of the AI Office of Ireland, describing it as the “conductor of the orchestra” responsible for ensuring a coherent national approach to AI governance. The Office will act as a central point of contact for citizens, government and the EU while also promoting innovation through regulatory sandboxes designed to support responsible AI development. In addition to the AI Office, 13 existing sectoral authorities have been designated to supervise AI systems within their specific domains.

A key takeaway from the discussion was that the EU AI Act takes a risk-based approach: while most AI systems will not fall into the “high-risk” category, transparency, accountability and governance obligations will apply broadly across most organisations deploying AI technologies.

Panellists repeatedly emphasised that regulation should not be viewed as a barrier to innovation. Instead, trusted AI systems and clear regulatory frameworks are increasingly becoming competitive advantages. Dale Sunderland of the Irish Data Protection Commission highlighted the importance of striking a balance between innovation and regulation, noting that the eyes of Europe and beyond are watching Ireland, particularly as the country gears up to take on the Presidency of the EU Council in July – a recurring theme throughout the summit.

The panel also highlighted several emerging challenges:

- AI systems typically evolve dynamically after deployment, which means that regulatory compliance cannot be treated as a once-off, static process but must keep pace with technological advancements.

- The rapid pace of AI capability development is outstripping existing evaluation and oversight methodologies, thereby posing additional challenges for companies.

- Regulators themselves require significant technical expertise and resourcing to effectively supervise AI ecosystems.

- Greater cooperation between regulators will be essential to identify synergies between existing regulations where responsibilities overlap across the AI Act, GDPR, financial services regulation and digital safety legislation.

One particularly important message for businesses was that AI compliance cannot remain solely a legal or compliance-team exercise. Governance, oversight, monitoring and ethical AI principles must become embedded across organisations. As one speaker noted, “If you see regulation as a compliance exercise only, this will be a challenging environment.”

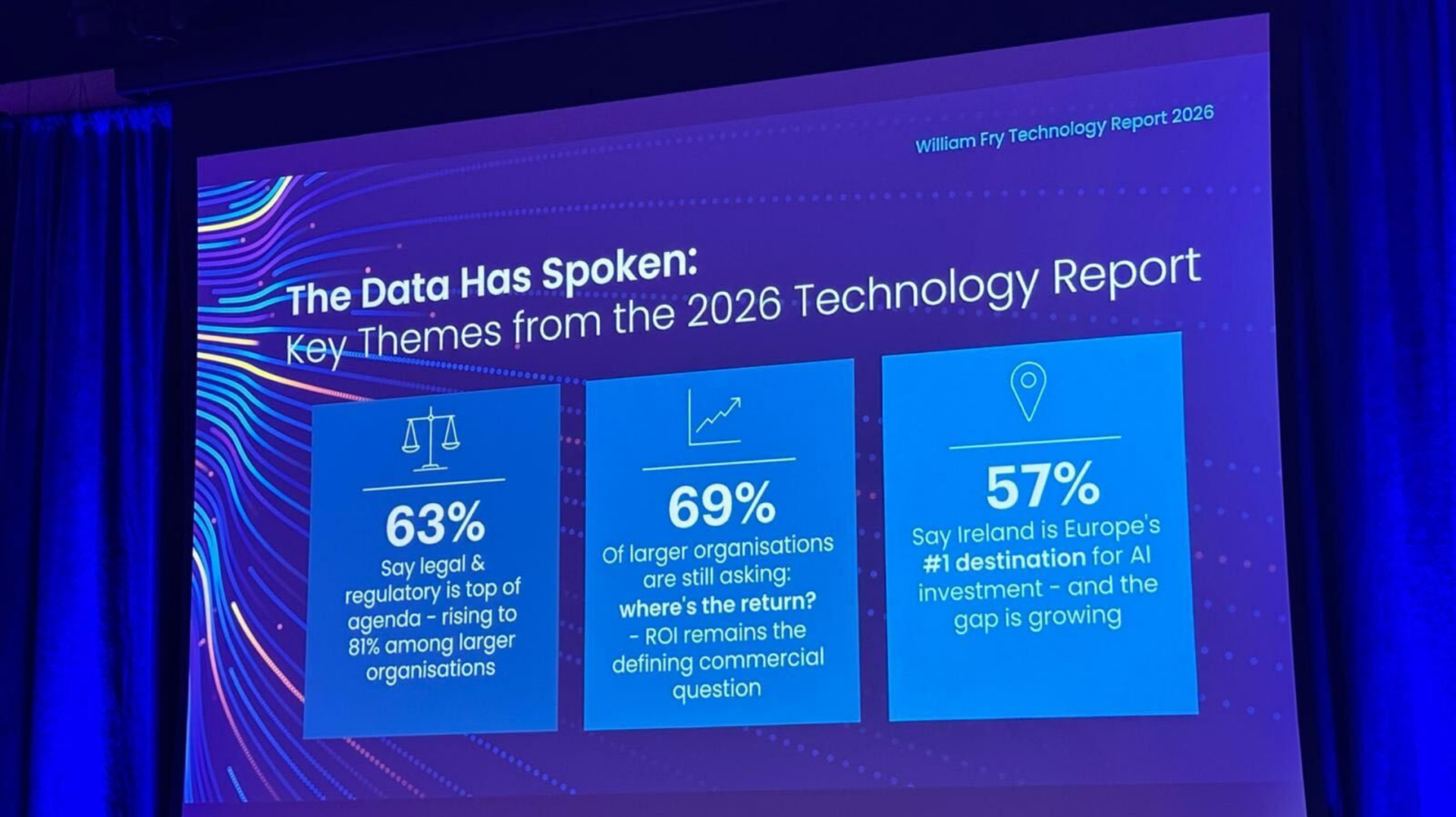

William Fry Technology Report 2026

The accompanying William Fry Technology Report, published to coincide with the Summit and informed by a March 2026 survey of 400 Irish companies, reinforced this theme by identifying legal and regulatory compliance as a critical enabler of successful AI deployment. Notably, 81% of larger companies in the survey identified legal and regulatory requirements as their primary consideration when adopting new technologies. In contrast, 55% of surveyed companies reported limited awareness of EU data and technology regulations, indicating that regulatory understanding continues to present a significant challenge for smaller and mid-sized businesses. Significantly, the survey also positioned Ireland as Europe’s leading destination for AI investment, highlighting its role as a bridge between US-led AI innovation and EU regulatory standards.

From Experimentation to Enterprise AI: The C-Suite Perspectives

The second panel, moderated by Maire O’Neil of William Fry, focused on how organisations are operationalising AI and moving from experimentation to scalable enterprise deployment. A recurring theme was that AI is no longer an isolated technology discussion confined to innovation teams – it is now firmly a boardroom priority.

Kieran McCorry of Microsoft observed that many organisations have moved beyond curiosity and are now asking practical questions around operationalisation, scalability and measurable return on investment (ROI).

Nathan Cullen of IBM outlined a clear maturity pathway for enterprise AI adoption:

- Establish trusted, accurate and aligned data foundations.

- Identify scalable workflows that operate horizontally across the organisation.

- Only then select AI technologies capable of supporting enterprise-wide deployment.

The discussion strongly reinforced that successful AI transformation is less about purchasing tools and more about organisational readiness.

Several themes emerged repeatedly:

- AI governance and guardrails are becoming critical enterprise capabilities.

- Human oversight remains essential in high-impact decision-making environments.

- Executive sponsorship is necessary for long-term adoption.

- AI deployment strategies must scale across the organisation rather than remain siloed within departments.

The panel also highlighted the growing importance of benchmarking and AI readiness assessments as companies seek to understand what “good” looks like within their own industries. One of the strongest observations from this discussion was that organisations under pressure to demonstrate rapid AI value creation may risk deploying solutions before appropriate governance structures are fully established. The conclusion was that organisations likely to succeed in the AI economy will be those doing the foundational work now – governance, trust, data quality, oversight and workforce readiness.

Procuring AI Responsibly: Contracts, Data and Vendor Trust

Panel three, moderated by Leo Moore of William Fry and comprising AI builders, buyers and content owners, explored the increasingly complex challenge of procuring AI systems in a rapidly evolving regulatory and technological environment.

The discussion highlighted that AI procurement is fundamentally different from traditional technology procurement because organisations are often buying systems that continuously evolve after deployment.

Key concerns included:

- Vendor lock-in risks given the pace of AI advancements

- Interoperability between AI systems

- Transparency around model updates

- Auditability and traceability

- Intellectual property protections

- Data quality and lawful data usage

- Measuring AI adoption and value creation

One particularly compelling insight was that the maturity challenge may lie less with the AI tools themselves and more with organisational maturity in using them effectively.

When discussing AI adoption, the panellists highlighted the importance of measuring success not only by focusing on return on investment (ROI), but also by checking whether teams are actually using the technology and how satisfied they are with it, e.g., through Employee Net Promoter Score (eNPS). It was also highlighted that, for value creation, employees should be trained so that they make full use of the technology available to them.

Panellists repeatedly stressed the importance of trust in the data that feeds AI. As one speaker noted: “Garbage in, garbage out.” High-quality, compliant and trustworthy data was described as the true intelligence layer underpinning successful AI systems. Obtaining compliant data also includes verification of copyrighted materials, as the interaction between intellectual property and AI is becoming increasingly important in both regulatory and commercial discussions. This is especially topical in light of the upcoming review of the EU Copyright Directive.

The discussion also highlighted how AI procurement contracts must evolve to address:

- Ongoing model changes

- Dynamic risk classifications

- Transparency obligations

- Fairness and bias monitoring

- Audit rights

- Exit clauses

- Shared accountability between vendors and buyers

Importantly, procurement was framed not simply as a contractual exercise but as a long-term partnership model. Organisations increasingly need AI vendors who are invested in adoption success, governance alignment and ongoing compliance support – not merely software providers.

Geopolitics, Digital Sovereignty and Europe’s AI Future

The keynote speech and the final panel discussion, moderated by Barry Scannell of William Fry, examined how Artificial Intelligence is reshaping geopolitics, digital sovereignty and Europe’s strategic future.

The European Commissioner for Democracy, Justice, the Rule of Law and Consumer Protection, Michael McGrath, delivered a strong message that Europe must not become merely a passive consumer of AI technologies developed elsewhere. Instead, the EU’s human-centric and rights-based approach to AI governance was presented as a strategic advantage capable of shaping global standards for trustworthy AI.

A central theme throughout the discussion was that trust will become one of the most valuable currencies in the global digital economy.

Topics discussed included:

- AI-driven disinformation and deepfakes

- Safeguarding democratic integrity

- Protecting digital sovereignty

- Protecting vulnerable groups, such as children

- Harmonisation of EU digital regulations

- Simplification of overlapping regulatory obligations

- Europe’s competitiveness in AI innovation

- The risk of European start-ups relocating outside the EU to grow and scale faster

Several speakers challenged the simplistic narrative of “innovation versus regulation”, concluding that the two are not mutually exclusive, while also arguing that clarity, predictability and harmonisation are necessary to foster stronger innovation ecosystems.

The forthcoming “EU Inc.” proposal – described by the Commissioner as a potentially transformative overhaul of EU company law – was highlighted as an important initiative designed to reduce fragmentation and make Europe a more attractive environment for scaling AI businesses.

Malcolm Byrne, Chair of the Irish Oireachtas Joint Committee on AI, emphasised that Artificial Intelligence should be viewed not as an end in itself, but as a powerful enabler of societal and economic progress, jokingly framing Europe’s AI opportunity as “MECA” – “Make Europe Competitive Again” – a clever nod to Donald Trump’s well-known MAGA acronym.

The discussion also reinforced the importance of “responsibility by design” – embedding ethical AI principles into products, organisations and public institutions from the outset.

One particularly striking statistic shared during the panel was that 68% of companies still do not fully understand their AI obligations under emerging regulatory frameworks. The gap between AI adoption and AI governance readiness may therefore ultimately define which organisations thrive in the next phase of digital transformation.

Final Reflections: Ireland at the Centre of Europe’s AI Future

The William Fry AI Summit reinforced that Ireland is uniquely positioned at the intersection of AI innovation, AI regulation and digital governance, but that long-term success in AI will depend on far more than technological capability alone.

Across the discussions, three interconnected themes consistently emerged: trust, governance and innovation. A particular focus was placed on the importance of trustworthy data, human oversight and transparent AI systems that organisations can confidently operationalise at scale.

For businesses deploying AI, the challenge is no longer simply adopting the technology – it is ensuring that the data powering AI systems is accurate, current, explainable and governed responsibly. As several panellists noted, human oversight remains critical in high-impact decision-making environments, particularly where AI outputs influence regulatory, legal or operational outcomes.

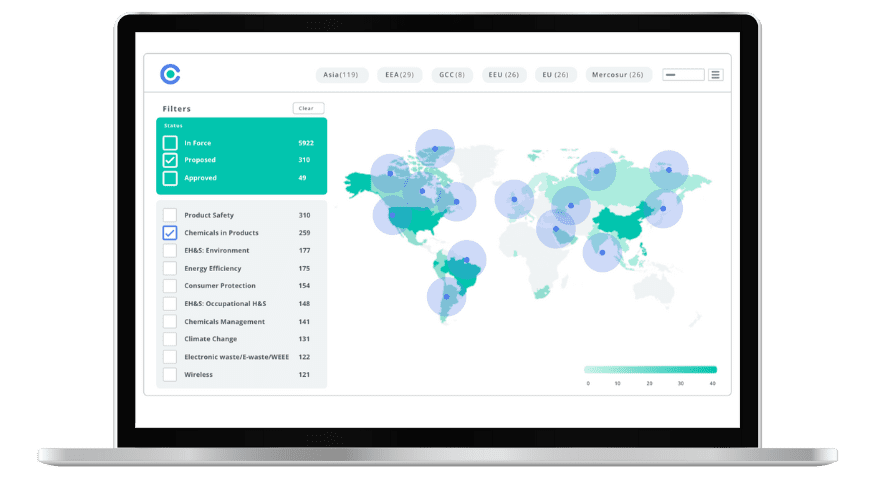

This is especially relevant in the context of AI-native regulatory intelligence platforms, where the value of Artificial Intelligence is directly linked to the quality, integrity and traceability of the underlying regulatory data. In highly regulated sectors, organisations increasingly need AI systems that do not operate as “black boxes”, but instead deliver trusted, transparent and actionable intelligence supported by strong governance frameworks.

The message from regulators, policymakers and industry leaders was clear: the future of AI in Europe will be shaped by organisations that can combine innovation with trustworthy data, responsible AI governance and meaningful human oversight.

As Europe continues shaping the global conversation on AI governance and trustworthy AI, Ireland appears well positioned to play a leading role in defining how AI can be deployed responsibly to support smarter, faster and more compliant decision-making.
