AI Liability and Product Safety: Emerging Frameworks for AI-Enabled Products

This blog was originally posted on 24th March, 2026. Further regulatory developments may have occurred after publication.

AUTHORED BY SAMANTHA ANGUIANO, REGULATORY COMPLIANCE SPECIALIST, COMPLIANCE & RISKS

Key Insight

Regulatory frameworks are increasingly adapting product liability rules to explicitly include AI-enabled products and software-driven systems. Rather than replacing existing regimes, legislators are extending traditional liability principles while introducing targeted updates to address lifecycle control, technical complexity, and digital risks.

Introduction

Regulatory approaches to product liability are evolving as legislators address risks associated with products incorporating artificial intelligence functionalities and software-driven components.

Recent developments across jurisdictions reflect not only a clarification of how existing product safety and liability frameworks apply to AI-enabled products, but also the introduction of targeted substantive updates to address the unique characteristics of software-driven and autonomous systems.

In the European Union, Directive (EU) 2024/2853 introduced a foundational update by explicitly extending product liability rules to software and digital elements. This approach is now being reflected in national implementation measures across Member States.

Many of these frameworks do not create entirely separate regimes. Instead, they build on traditional product liability principles while expanding their scope to cover digital elements, lifecycle control, and complex system behaviour.

How Are AI-Enabled Products Being Brought Within Product Liability Frameworks?

A key development is the explicit recognition that software and digital elements, including AI functionalities embedded in products, fall within the scope of product liability regimes.

Under Directive (EU) 2024/2853, software – regardless of how it is supplied – is included within the definition of a product. This encompasses standalone software, embedded systems, digital manufacturing files, and related digital services integrated into physical products. National implementation measures, including those proposed in jurisdictions such as Denmark, Germany, and the Netherlands, reflect this expanded scope.

Importantly, liability extends to harm caused by defective digital elements, including situations involving:

- Software updates and upgrades;

- Failures to provide necessary updates;

- Machine learning–driven system evolution; and

- Cybersecurity vulnerabilities affecting product safety.

These frameworks also recognise that manufacturers may retain control over products after they are placed on the market, particularly through updates or connected services. This ongoing control is now a relevant factor in assessing defectiveness.

At the same time, certain limitations apply. For example, non-commercial open-source software may fall outside the scope of product liability regimes in the EU.

How Are the U.S. Proposals Addressing Liability for AI-Enabled Products?

Legislative developments in the United States reflect a more fragmented but increasingly specific approach, with several proposals establishing dedicated liability regimes for AI systems.

In Illinois (Senate Bill 3590) and Maryland (House Bill 712), proposed frameworks establish direct liability for developers and, in certain circumstances, deployers, for harm caused by defective AI-enabled products. These proposals align liability with established product defect categories, including:

- Defective design;

- Failure to provide adequate warnings or instructions; and

- Breach of express warranty.

In addition, some proposals introduce presumptions linked to testing, validation, and risk management practices, which may influence how defectiveness is assessed in cases involving complex software-driven systems.

In Georgia (Senate Bill 488), proposed amendments focus specifically on generative AI systems in the context of product liability actions involving minors. The bill provides that such systems may be treated as personal property for liability purposes and introduces a rebuttable presumption relating to the duty to warn, particularly where product sellers are involved.

At the federal level, the proposed AI LEAD Act (Senate Bill 2937) seeks to establish a baseline liability framework for artificial intelligence products, including provisions on enforcement and limitations on contractual liability waivers.

Taken together, these proposals both clarify how established liability concepts apply to AI-enabled products and create AI-specific liability frameworks. They also form part of a broader effort to define how responsibility is allocated across the product lifecycle.

What Types of Defects Are Being Considered in AI-Enabled Products?

Across both EU and U.S. developments, liability remains anchored in established product defect concepts, but these are being adapted to reflect digital and autonomous characteristics.

U.S. proposals consistently rely on:

- Defective design;

- Failure to warn or instruct; and

- Unsafe or harmful product performance.

Within the EU framework, defectiveness continues to be assessed based on the level of safety that the public is entitled to expect. However, this assessment now explicitly incorporates factors specific to software-driven systems, including:

- The ability of systems to evolve through updates or learning;

- Interactions with other connected products or services;

- Cybersecurity risks impacting safe operation; and

- The manufacturer’s ongoing control over the product.

Additionally, liability may extend to damage to data, alongside traditional categories such as personal injury and property damage.

How Are Regulators Addressing Evidence and Technical Complexity?

The technical complexity of AI-enabled products has led regulators to introduce mechanisms aimed at facilitating claims and addressing evidentiary asymmetries.

Under Directive (EU) 2024/2853 and its national implementations, courts may order disclosure of relevant evidence held by defendants. The framework also introduces rebuttable presumptions regarding defectiveness and causation, particularly where claimants face disproportionate difficulties due to scientific or technical complexity.

Similarly, some U.S. proposals – particularly at the state level – introduce presumptions linked to testing, validation, and risk management practices. In certain cases, adherence to such practices may support a presumption that a system is not defective, while failures may weigh in favour of liability.

These developments reflect a broader recognition that traditional evidentiary standards require adjustment when applied to opaque, adaptive, and data-driven systems.

What Trends Are Emerging in AI Liability and Product Safety?

Recent developments across jurisdictions reveal several consistent trends:

- Software and digital elements are firmly integrated into product liability frameworks, including standalone and embedded systems;

- Existing liability principles are being extended rather than replaced, but with significant substantive updates;

- AI-specific legislative regimes are emerging, particularly in the United States;

- Liability increasingly reflects the full product lifecycle, including post-market updates and ongoing control;

- Product safety assessments now explicitly incorporate cybersecurity, system evolution, and interoperability risks; and

- New evidentiary tools and presumptions are being introduced to address technical complexity and information asymmetry.

Overall, regulatory frameworks are evolving toward a model that retains the core structure of product liability law while adapting it to the realities of software-driven and AI-enabled products. This evolution reflects both continuity and substantive legal innovation in response to emerging technological risks.

For further information on AI legislation across the globe, check out our guide ‘AI Rules Are Changing: Strategic Insights To Market Access and Mandatory Compliance in 2026‘.

Stay Ahead Of Regulatory Changes in AI

Want to stay ahead of regulatory developments in AI?

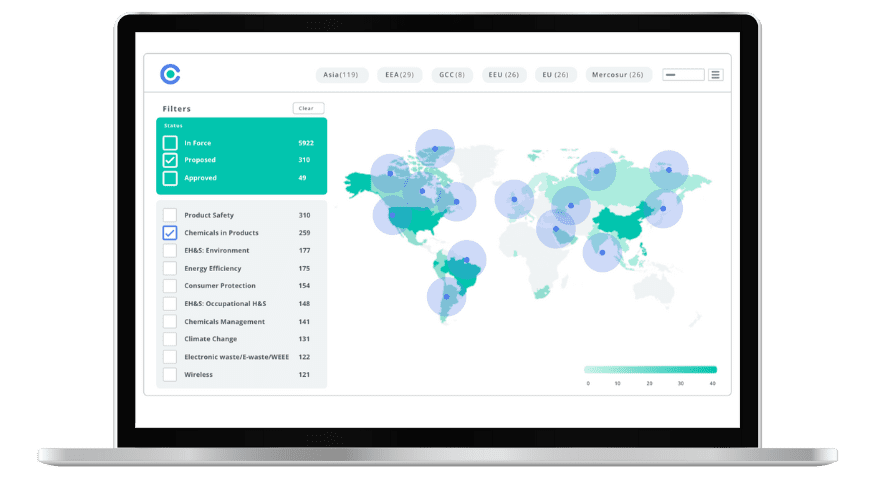

Accelerate your ability to achieve, maintain & expand market access for all products in global markets with C2P – your key to unlocking market access, trusted by more than 300 of the world’s leading brands.

C2P is an enterprise SaaS platform providing everything you need in one place to achieve your business objectives by proving compliance in over 195 countries.

C2P is purpose-built to be tailored to your specific needs with comprehensive capabilities that enable enterprise-wide management of regulations, standards, requirements and evidence.

Add-on packages help accelerate market access through use-case-specific solutions, global regulatory content, a global team of subject matter experts and professional services.

- Accelerate time-to-market for products

- Reduce non-compliance risks that impact your ability to meet business goals and cause reputational damage

- Enable business continuity by digitizing your compliance process and building corporate memory

- Improve efficiency and enable your team to focus on business critical initiatives rather than manual tasks

- Save time with access to Compliance & Risks’ extensive Knowledge Partner network

Simplify Corporate Sustainability Compliance

Six months of research, done in 60 seconds. Cut through ESG chaos and act with clarity. Try C&R Sustainability Free.